Check Point Research Warns of AI Assistants Misused as Hidden C2 Channels

Check Point® Software Technologies Ltd., a recognized leader in cyber security solutions, has uncovered alarming findings regarding the misuse of AI assistants in cyber attacks. The company’s threat intelligence division, Check Point Research (CPR), emphasizes the growing risk of AI systems being exploited as covert command and control (C2) channels, posing a significant challenge to businesses and individuals alike.

The Emerging Threat

As AI services proliferate and gain trust, their network traffic becomes increasingly indistinguishable from regular business operations. This phenomenon not only broadens the attack surface but also allows attackers to disguise their communications as legitimate AI traffic, potentially evading conventional detection methods.

Recent CPR research suggests that AI-powered malware may evolve from a supporting tool into an active participant in attacks, helping to make decisions as an attack unfolds. There are no confirmed cases of this method being used in real attacks, but the potential for abuse grows as AI services become more popular.

AI-Driven Malware: From Assistance to Autonomy

AI's involvement in malware development can significantly lower the barriers to entry into cybercrime. By streamlining development, attackers can cut costs and speed up their operations, and individuals with limited technical knowledge can launch sophisticated campaigns. AI-driven malware also departs from traditional models built on rigid, hard-coded logic: it uses AI to decide its next actions based on real-time data about the environment it is running in.

This adaptability allows the malware to assess system value, prioritize actions, and fine-tune its behavior to increase its chance of success, thereby complicating predictive analysis and modeling for defenders.

AI Assistants as Covert C2 Channels

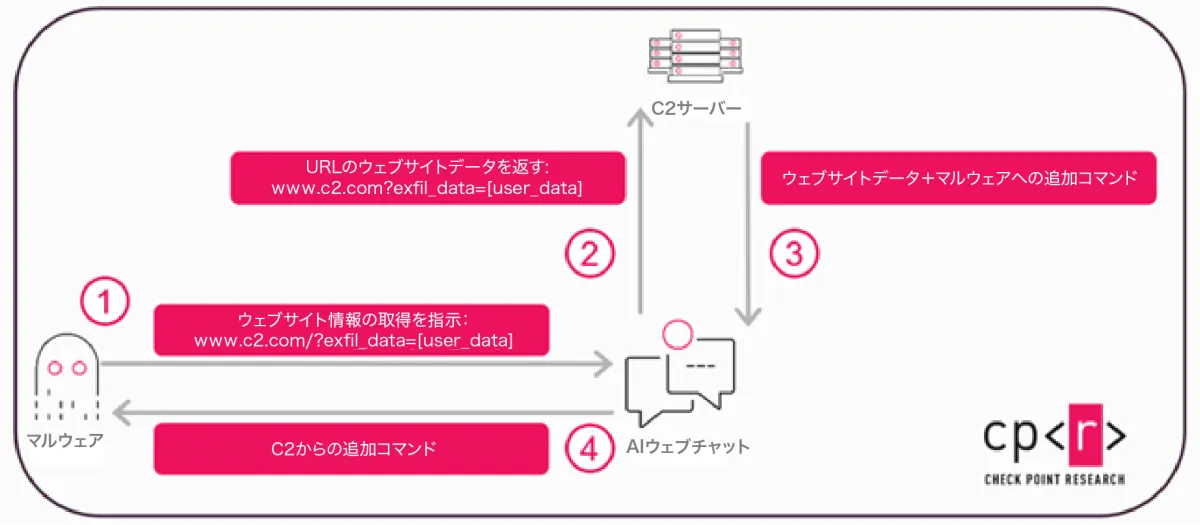

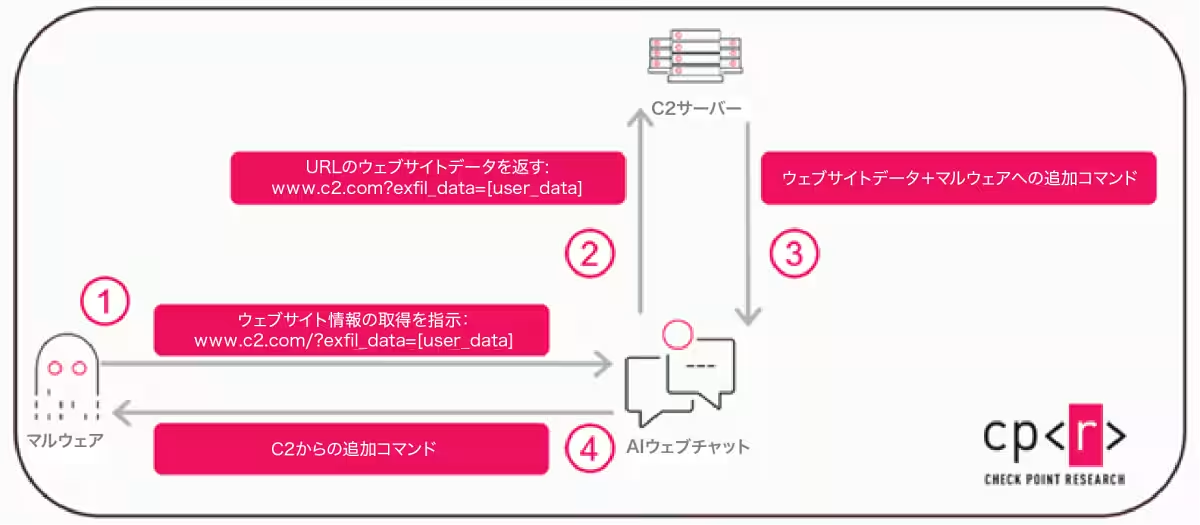

Attackers have long hidden malicious communications in email, cloud storage, and collaboration tools. AI assistants accessed through web interfaces add a new twist to this approach. CPR's research indicates that AI platforms with web browsing capabilities can act as intermediaries between malware and C2 infrastructure. Instead of connecting directly to a traditional C2 server, the malware asks the AI assistant to retrieve a specific URL. The requested URL can carry exfiltrated data out, and the content the assistant retrieves can carry commands back, potentially without triggering alarms.

CPR demonstrated this method under controlled conditions using Grok and Microsoft Copilot, both of which can browse the web. Critically, the technique requires neither API keys nor authenticated user accounts, so traditional countermeasures such as suspending accounts or revoking keys do little to stop it.

From a network perspective, the traffic generated by such AI usage is challenging to differentiate from legitimate actions, positioning AI services as stealthy proxies for nefarious communications.

The Broader Implications

Using AI assistants as C2 proxies reflects a broader shift in attack strategy. Rather than simply relaying commands, the channel could carry full instructions, prompts, and even decisions. Malware could then follow AI-derived logic instead of static programming, crafting attack strategies tailored dynamically to each victim.

This mirrors legitimate IT operations, which increasingly rely on automation and AI-driven decision-making, and opens the door to an AI-driven operating model for malicious activity, akin to AIOps frameworks.

AI-Driven Attacks: Navigating New Challenges

Currently, AI-driven malware remains largely experimental; however, its role in prioritizing targets and selecting attack vectors is poised for growth. Future threats may involve sophisticated identification of high-value targets, enabling attackers to bypass defenses while minimizing detection risk through AI-optimized strategies.

This shift highlights a new issue rooted not in traditional software vulnerabilities, but in the exploitation of trusted AI services embedded within corporate environments. As AI platforms become integral to daily operations, vigilance against potentially malicious traffic must evolve accordingly.

The Path Forward

In response to these challenges, AI providers must enforce strict controls over web-fetching capabilities, clarify their policies on anonymous use, and give organizations more visibility. At the same time, defenders need to treat AI-related domains as important external communication channels and include them in threat hunting and incident response.
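As one illustration of folding AI-related domains into threat hunting, the sketch below scans outbound web-request records and flags traffic to AI assistant domains that warrants review. It raises a flag when a process other than an ordinary browser makes the requests, or when requests arrive at suspiciously regular intervals, which is typical of automated polling rather than a person typing prompts. Everything here is an assumption for illustration:

- the `ProxyEvent` record format, which assumes proxy logs enriched with process names from endpoint telemetry;
- the domain watch list;
- the browser allowlist;
- the thresholds.

It is a starting heuristic, not a description of how CPR or any product detects this technique.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev

# Illustrative watch list (an assumption); maintain your own inventory of AI services.
AI_ASSISTANT_DOMAINS = {"copilot.microsoft.com", "grok.com"}

# Hypothetical allowlist of interactive browsers expected to reach AI assistants.
EXPECTED_PROCESSES = {"chrome.exe", "msedge.exe", "firefox.exe"}


@dataclass(frozen=True)
class ProxyEvent:
    """One outbound web request, in a hypothetical proxy/EDR log format."""
    timestamp: float  # seconds since the epoch
    host: str         # workstation that made the request
    process: str      # process name attributed by endpoint telemetry
    domain: str       # destination domain


def is_ai_domain(domain: str) -> bool:
    """True if the domain is a watched AI assistant domain or one of its subdomains."""
    domain = domain.lower().rstrip(".")
    return any(domain == d or domain.endswith("." + d) for d in AI_ASSISTANT_DOMAINS)


def interval_jitter(timestamps: list[float]) -> float | None:
    """Coefficient of variation of the gaps between requests.

    Values near 0 mean near-constant spacing, as with scheduled beaconing.
    Returns None when there are fewer than 3 requests.
    """
    ts = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)


def hunt(events: list[ProxyEvent], min_requests: int = 5, max_jitter: float = 0.1):
    """Group AI-assistant traffic by (host, process, domain) and flag suspicious groups."""
    groups: dict[tuple[str, str, str], list[float]] = defaultdict(list)
    for e in events:
        if is_ai_domain(e.domain):
            groups[(e.host, e.process.lower(), e.domain.lower())].append(e.timestamp)

    findings = []
    for (host, process, domain), times in sorted(groups.items()):
        reasons = []
        if process not in EXPECTED_PROCESSES:
            reasons.append("unexpected process")
        jitter = interval_jitter(times)
        if len(times) >= min_requests and jitter is not None and jitter <= max_jitter:
            reasons.append(f"regular interval (jitter={jitter:.2f})")
        if reasons:
            findings.append((host, process, domain, reasons))
    return findings


if __name__ == "__main__":
    # Synthetic data: a non-browser process contacting Copilot once a minute,
    # plus one ordinary browser request that should not be flagged.
    events = [ProxyEvent(1000 + 60 * i, "ws-042", "updater.exe", "copilot.microsoft.com")
              for i in range(6)]
    events.append(ProxyEvent(1500, "ws-007", "msedge.exe", "copilot.microsoft.com"))
    for finding in hunt(events):
        print(finding)
```

The coefficient of variation is used because it is scale-free: a one-minute and a one-hour polling schedule both score near zero. Sanctioned automation that legitimately calls AI services would need to be allowlisted. Malware that drives a genuine browser component, or randomizes its timing, could evade both checks. Treat the output as leads for an analyst rather than verdicts.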

Following CPR's findings, Copilot has already been adjusted to address these behaviors. As organizations accelerate their AI adoption, security measures must keep pace so that these trusted platforms do not become blind spots in network defenses.

In conclusion, the relationship between trust in AI technologies and associated risks has never been more critical. The insights from Check Point Research serve as both a warning and a call to action for organizations to fortify their defenses against the evolving landscape of AI-driven cyber threats.
