Latest AI Breaks Record for University Entrance Exams in Japan

AI Breaks Records in University Entrance Exams

In a groundbreaking evaluation, LifePrompt, a startup based in Shinjuku, Tokyo, has shown that the latest AI models can score higher than even the top-scoring admitted students on the entrance examinations for Japan's prestigious Tokyo University and Kyoto University. Conducted with the cooperation of Kawai Preparatory School, the study had ChatGPT 5.2 Thinking, Gemini 3 Pro Preview, and Claude 4.5 Opus answer questions from the entrance exams held in February 2026.

Key Findings

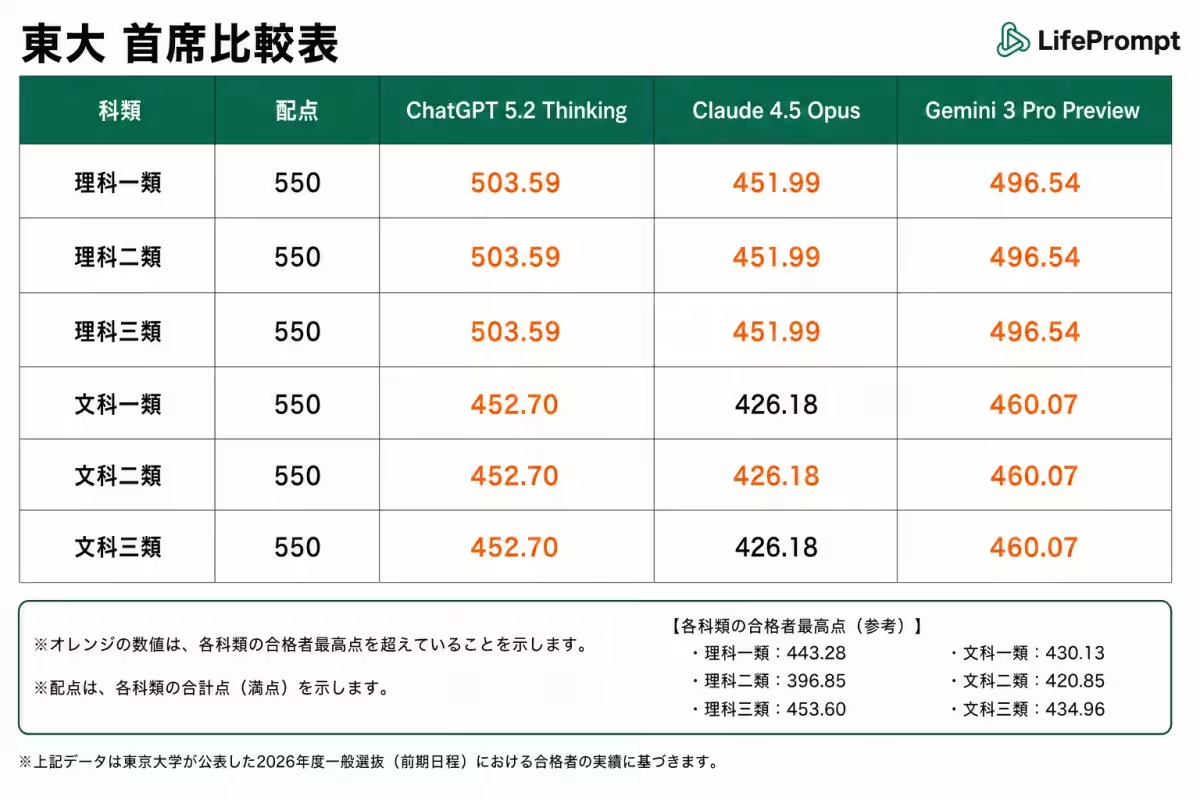

The results were notable, with the AI models performing exceptionally well at both universities:

Tokyo University (Science Track III - 550 points available)

- ChatGPT 5.2 Thinking: 503.59 points

- Gemini 3 Pro Preview: 496.54 points

- Claude 4.5 Opus: 451.99 points

- Highest score among admitted students (for reference): 453.60 points

Remarkably, both ChatGPT and Gemini exceeded the highest score among admitted students in all six disciplines at Tokyo University.

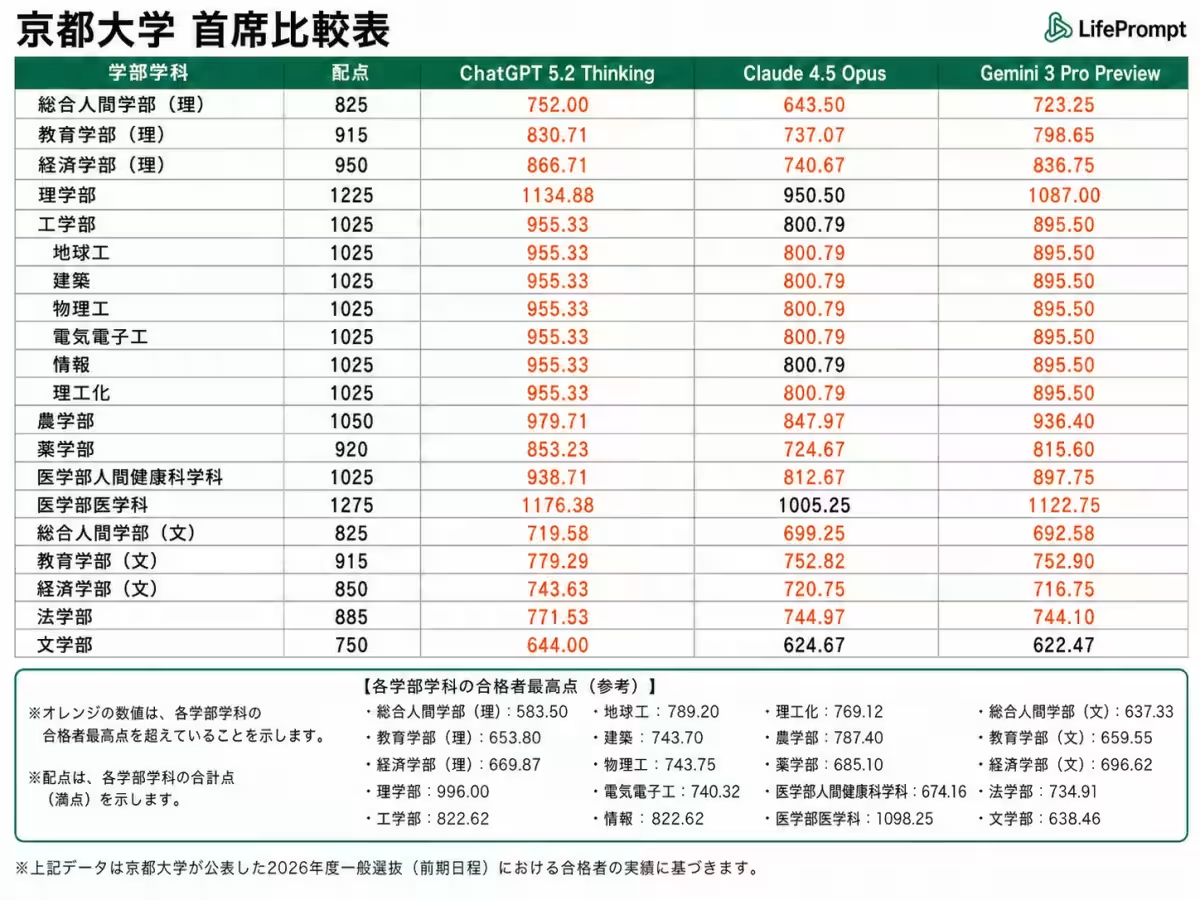

Kyoto University (Medical Faculty - 1275 points available)

- ChatGPT 5.2 Thinking: 1176.38 points

- Gemini 3 Pro Preview: 1122.75 points

- Claude 4.5 Opus: 1005.25 points

- Highest score among admitted students (for reference): 1098.25 points

ChatGPT surpassed the highest score among admitted students in all 19 disciplines at Kyoto University, while Gemini did so in 18 disciplines and Claude in 14.
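The margins behind these comparisons can be checked with simple arithmetic. The following is a minimal sketch that uses only the Science Track III and Medical Faculty totals listed above; the dictionary layout and the `summarize` helper are illustrative assumptions, not part of LifePrompt's evaluation system.

```python
# Scores copied from the results listed above. The data layout and the
# helper function are illustrative assumptions, not LifePrompt's tooling.
EXAMS = {
    "Tokyo University (Science Track III)": {
        "max_points": 550,
        "top_admitted": 453.60,
        "scores": {
            "ChatGPT 5.2 Thinking": 503.59,
            "Gemini 3 Pro Preview": 496.54,
            "Claude 4.5 Opus": 451.99,
        },
    },
    "Kyoto University (Medical Faculty)": {
        "max_points": 1275,
        "top_admitted": 1098.25,
        "scores": {
            "ChatGPT 5.2 Thinking": 1176.38,
            "Gemini 3 Pro Preview": 1122.75,
            "Claude 4.5 Opus": 1005.25,
        },
    },
}


def summarize(exam):
    """Return (model, percent of available points, margin over top admitted score)."""
    rows = []
    for model, score in exam["scores"].items():
        percent = 100 * score / exam["max_points"]
        margin = score - exam["top_admitted"]
        rows.append((model, round(percent, 1), round(margin, 2)))
    return rows


if __name__ == "__main__":
    for name, exam in EXAMS.items():
        print(name)
        for model, percent, margin in summarize(exam):
            print(f"  {model}: {percent}% of available points, margin {margin:+.2f}")
```

A positive margin means the model scored above the highest admitted student for that track; a negative margin means it fell short.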

Subjects with Perfect Scores

- Tokyo University:

  - Humanities Mathematics (80 points): Both systems also recorded a perfect score.

- Kyoto University:

  - Humanities Mathematics (150 points): Perfect score by ChatGPT.

  - Chemistry (100 points): Perfect score by ChatGPT.

In last year's evaluation, the AI scored only 38 points in the science mathematics section at Tokyo University. Reaching perfect scores within just one year shows how rapidly generative AI capabilities are evolving.

Evaluation Methodology

To ensure the fairness of evaluations, LifePrompt utilized a proprietary automated examination system. Key features of this system included:

- Exam questions were converted into images and sent to the AI models via API.

- Systems exchanged data directly, with no human intervention, to eliminate bias.

- A standardized prompt instructed the models to answer with high-school-level knowledge and to write mathematical expressions in LaTeX.

- Web searches were not allowed; answers relied solely on each AI's pre-existing knowledge and reasoning capabilities.

- Written responses were graded by Kawai Preparatory School instructors using the same standards applied to human examinees.
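The first steps of this pipeline can be sketched as follows. This is a hedged illustration only: the prompt wording, the `build_request` helper, and the payload field names are hypothetical, since the release does not publish LifePrompt's proprietary system or any provider's request format. The sketch builds a payload and makes no network call.

```python
import base64
import json

# Hypothetical wording: the actual standardized prompt is not published
# in this release.
STANDARD_PROMPT = (
    "Answer using only high-school-level knowledge. "
    "Write all mathematical expressions in LaTeX. "
    "Do not use web search."
)


def build_request(image_bytes: bytes, model: str) -> dict:
    """Package one exam page image as a provider-neutral payload.

    The field names are assumptions for illustration; a real provider API
    defines its own request schema.
    """
    return {
        "model": model,
        "prompt": STANDARD_PROMPT,
        # Images are commonly base64-encoded so they can travel inside JSON.
        "image_base64": base64.b64encode(image_bytes).decode("ascii"),
        # An empty tool list stands in for "no web search allowed".
        "tools": [],
    }


if __name__ == "__main__":
    payload = build_request(b"placeholder image bytes", "example-model")
    print(json.dumps(payload, indent=2))
```

Using the same payload builder for every model is one way to keep the prompt identical across systems, which is the point of a standardized, human-free exchange.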

Insights from Kawai Instructors

Instructors who participated in the grading process offered sharp insights into the strengths and weaknesses of the AIs:

- “All three AIs exceeded our expectations, particularly the remarkable answering capability demonstrated by ChatGPT,” said Mr. Ryo Mukai, evaluator for Tokyo University Biology.

- “Each AI produced answers that met passing standards, but what stood out was their processing speed; their efficiency far exceeds that of human examinees,” added Mr. Takuya Ogura, evaluator for Japanese History at Kyoto University.

However, several weaknesses were identified:

- Image Recognition: Problems recognizing structures, graphs, and maps, particularly with Claude.

- Coherence in Written Responses: Logical and causal relationships were handled weakly relative to the breadth of the models' knowledge.

- Output Control: Frequent failures to adhere to specified character limits or answer formats.

- Cultural Familiarity: Missteps in physics caused by prioritizing Western conventions over Japanese ones.
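The output-control weakness is the easiest of these to check mechanically. As a minimal sketch, assuming a limit expressed in characters and counting every character after trimming surrounding whitespace (the actual counting rules used in grading are not described in this release), a hypothetical `check_answer` helper could flag over-length answers:

```python
def check_answer(answer: str, max_chars: int) -> dict:
    """Report whether a written answer fits a character limit.

    Assumption: every character, including punctuation, counts toward the
    limit, and leading or trailing whitespace is ignored.
    """
    length = len(answer.strip())
    return {
        "length": length,
        "within_limit": length <= max_chars,
        "excess": max(0, length - max_chars),
    }


if __name__ == "__main__":
    print(check_answer("An answer that must fit in thirty characters.", 30))
```

A check like this could run before an answer is submitted for grading, so that the model is asked to shorten its response instead of losing points on format.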

Comments from the CEO

Satoshi Endo, LifePrompt's CEO, expressed his excitement about the AI models surpassing the top scores at Tokyo University:

“I was genuinely moved by these results. This evaluation revealed that while AI can excel at certain tasks, others remain challenging. It highlights the importance of designing tasks that are balanced with the AI's capabilities in practical applications.”

He added, “The entrance exams have clearly demonstrated the raw ‘smartness’ of these foundation models. The next phase will focus on how companies connect their own data and operations to these models to create impact.

“AI has evolved remarkably fast, going from a mere 38 points to a perfect score within a year, which signals that building structures around present AI limitations is unwise. Businesses need to think ahead, considering AI trajectories over the next decade to effectively design their operations and organizations.”

For comprehensive results and detailed analysis on subject scores, instructor evaluations, and answer sheets, you can access the complete report on our note page.

About LifePrompt

With the mission “to liberate aspirations,” LifePrompt is at the forefront of implementing AI transformation in society. We define this transformation as creating a seamless working relationship between humans and AI, envisioning a future where AI acts as a colleague, engaging with human staff in organizations.

We aim to realize this vision with our platform ‘AxMates,’ set to launch in April 2026. AxMates will allow organizations to safely employ AI workers capable of memory, learning, and autonomous execution, enhancing efficiency and engagement in the workplace.

- Company Name: LifePrompt LLC

- Location: Shinjuku, Tokyo

- Established: May 2023

- Founder: Satoshi Endo, CEO

- Business Initiatives: Development and provision of the AI employee platform ‘AxMates,’ support for AX (AI transformation) initiatives, and contract-based AI development.

- Website: LifePrompt

- AxMates: AxMates Site

For inquiries, please contact LifePrompt’s public relations team via email at [email protected] or by phone at 03-6899-1960.
