Ragate Finds 35.2% of Companies Acknowledge AI Hallucination Issues

Addressing AI Hallucination: Insights from Ragate's Study

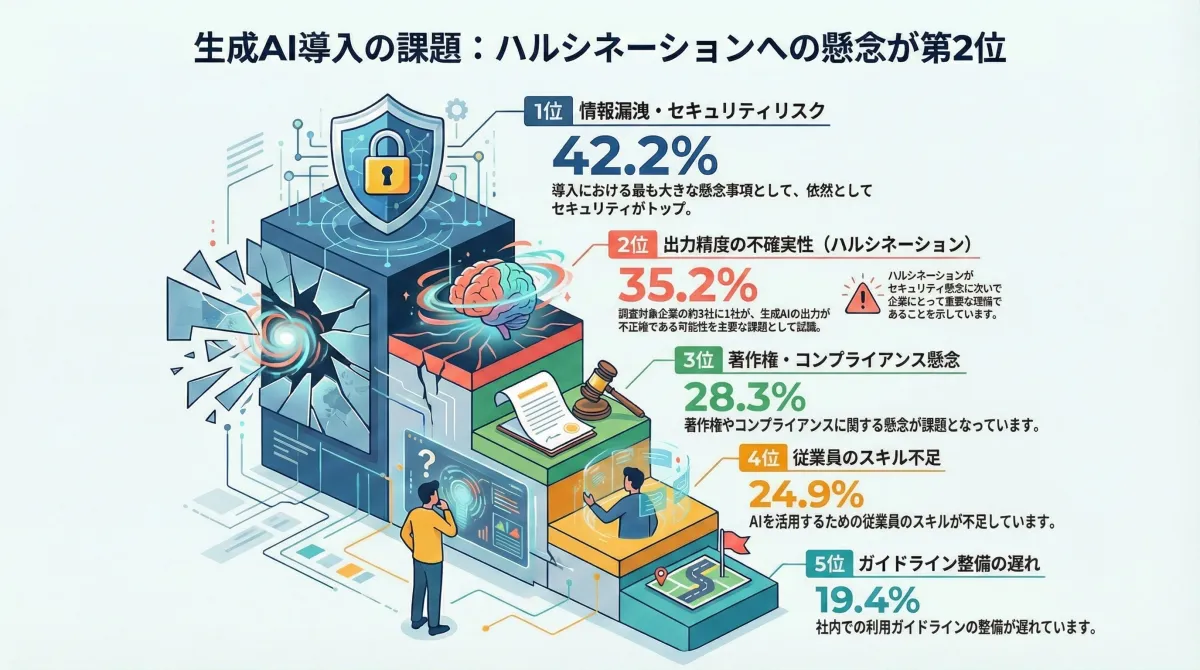

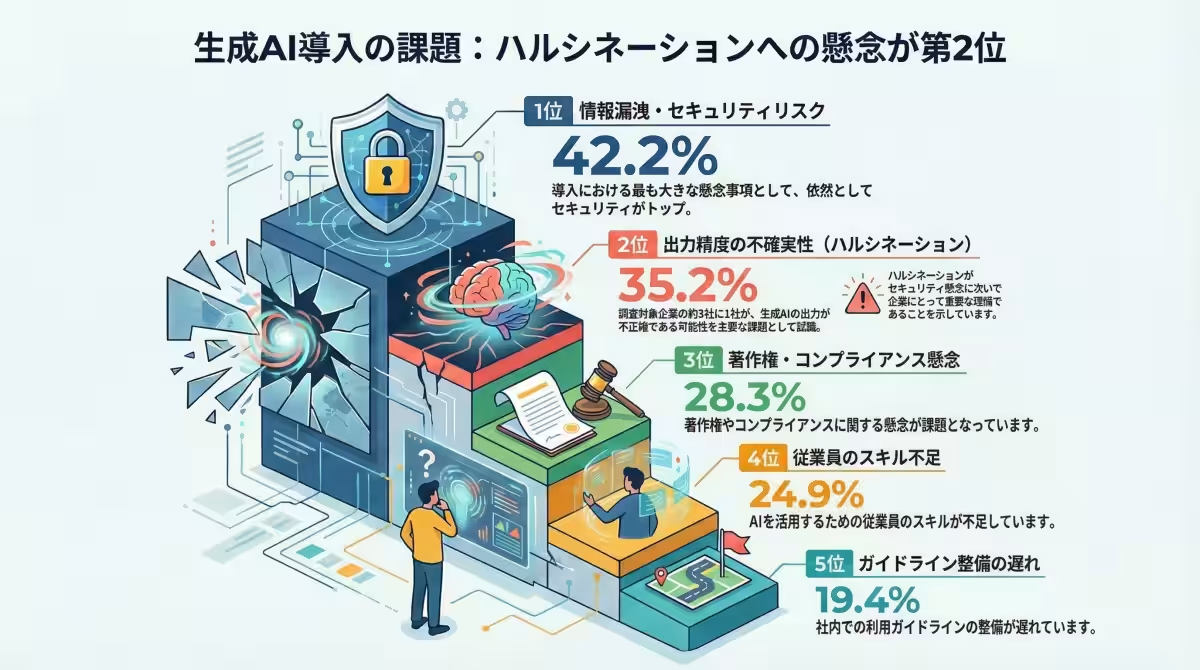

Ragate Inc., a company specializing in AI solutions, surveyed 505 business professionals working in information systems and digital transformation in December 2025. The study examined the challenges of using generative AI, focusing on output accuracy and the phenomenon known as AI hallucination. Of the respondents, 35.2% named hallucination as a significant problem, making it the second most cited issue after security risks (42.2%). The result suggests that hallucination is a pressing concern that could hinder the effective use of AI in business operations.

Understanding AI Hallucination

AI hallucination refers to cases in which generative AI confidently produces inaccurate or completely fabricated information. For example, professionals reported receiving answers that lacked a factual basis, such as incorrect data presented as fact. The issue arises largely because large language models (LLMs), such as those behind ChatGPT, are designed to generate plausible text rather than to guarantee accurate output. Flaws in training data, limited understanding of context, and the absence of up-to-date information all contribute to the problem, creating a serious risk in business decision-making.

The Survey Findings

1. Recognition of Hallucination as a Challenge: In the survey, 35.2% of respondents cited uncertainty in output accuracy (hallucination) as a major issue; only security risks ranked higher. The figure suggests that the problem goes beyond individual user error and calls for an organization-level response.

2. High-Risk Areas Identified: The study analyzed the application areas of AI within businesses and their related hallucination risks. The findings revealed that 39.2% of respondents mentioned the fields of information collection, research, and analysis as carrying the highest risk of incorrect decision-making due to hallucinations. This is especially concerning because misleading information can lead directly to financial losses. Other relevant areas include system development and operational management, where flaws introduced by AI-generated code pose additional risks.

3. Effective Mitigation Strategies: To address the issue of AI hallucinations, the most effective approach identified in the survey is the implementation of RAG (Retrieval-Augmented Generation). This technique enhances AI’s output by integrating it with internal knowledge bases and verified information sources. Several supplementary techniques were also highlighted, including:

- Establishing Fact-Checking Systems: Implementing a process that allows for AI outputs to be verified and reviewed before final use.

- Optimizing Prompt Engineering: Designing prompts that guide AI behavior with clear instructions on when to admit uncertainty.

- Choosing Models Based on Use: Selecting models tailored to specific requirements, such as fact verification or cost efficiency.

- Continuous Monitoring and Improvement: Setting KPIs to track hallucination rates and adjustments made during fact-checking.
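The strategies above can be illustrated with a minimal sketch. It is not Ragate's implementation and calls no real LLM service: the `Document` class, the word-overlap `retrieve` function (a stand-in for the embedding search a production RAG system would typically use), the prompt wording, and the `hallucination_rate` KPI are all hypothetical names chosen for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    source: str  # hypothetical id of an internal knowledge-base entry
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a simple stand-in for a real embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[Document], top_k: int = 2) -> list[Document]:
    """Rank documents by word overlap with the query and keep the best matches."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(d.text)), d) for d in docs]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]


def build_prompt(query: str, context: list[Document]) -> str:
    """Ground the model in retrieved text and tell it when to admit uncertainty."""
    if context:
        sources = "\n".join(f"[{d.source}] {d.text}" for d in context)
    else:
        sources = "(no matching documents)"
    return (
        "Answer using only the sources below and cite the source id.\n"
        "If the sources do not contain the answer, reply 'I don't know'.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


def hallucination_rate(review_results: list[bool]) -> float:
    """Share of reviewed outputs that fact-checkers flagged as incorrect."""
    if not review_results:
        return 0.0
    return sum(review_results) / len(review_results)


if __name__ == "__main__":
    knowledge_base = [
        Document("hr-001", "Employees receive 20 days of paid leave per year."),
        Document("it-007", "VPN access requires multi-factor authentication."),
    ]
    question = "How many days of paid leave do employees get?"
    hits = retrieve(question, knowledge_base)
    print(build_prompt(question, hits))  # prompt to send to the chosen model
    # True marks an output that a reviewer flagged as a hallucination.
    print(hallucination_rate([False, True, False, False]))
```

In this sketch the prompt would be passed to whichever model the organization selects, and the reviewer flags that feed `hallucination_rate` would come from the fact-checking step, so the KPI can be tracked over time.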

Ragate's Vision and Future Outlook

Ragate concludes from the study that while hallucinations cannot be entirely eliminated, they can be managed within acceptable limits. That 35.2% of respondents recognize hallucination as a problem to be governed reflects a maturing understanding of AI's role in business. As more organizations integrate generative AI, managing output quality becomes not just an IT challenge but a core business issue. This trend underlines the importance of quality-control mechanisms, especially in sectors that rely heavily on AI for decision-making.

Ragate envisions making these countermeasures widely accessible by combining RAG with no-code tools. RAG environments, which were traditionally complex to set up, can now be built with platforms like Dify, in conjunction with Amazon Bedrock for secure hosting on AWS. According to Ragate, this combination addresses two major challenges companies face today: security concerns and hallucination risks.

Going forward, the question for corporate AI use will shift from whether to use it to how to ensure quality and accuracy in its application. The divide between companies that can mitigate hallucinations and those that cannot will likely widen, affecting decision-making accuracy and overall business quality. To support this transition, Ragate offers the expertise of its AWS-certified team to help businesses build RAG environments and secure their AI applications.

For companies interested in building reliable AI solutions, Ragate is available for consultations on the practical implementation of RAG and the establishment of trustworthy AI chatbots tailored for internal use.

Learn More

- Explore Generative AI Development Support Services

- Discover Support for Achieving AX and Dify Development

This article emphasizes the rising awareness of AI hallucination risks and the growing need for actionable strategies to maintain AI output quality in today's businesses.

